Current Product Target

The current target is a consciously scoped 1.0: four playable stages, one reliable capture/rescue loop, clean high-score/results flow, a polished cabinet-like shell, and public hosting that is trustworthy enough to share.

A readable, current map of the project: what we are building, how the code and tooling fit together, and what still matters before the polished four-stage 1.0 launch.

The high-level state of the game and release effort right now.


Manual play says the core slice is healthy enough to launch if the final trust items land cleanly: capture/rescue is guarded end-to-end, the shell/control rail is much stronger, native replay is in the game, and the remaining release work is mostly production correctness plus final presentation confidence.

The center of gravity has shifted from broad gameplay tuning to visible shell, feedback, and trust polish. Stage 2 and Stage 4 tuning are still important, but they are now intentionally treated as likely 1.1 work unless new regressions appear.

Reference material, harness evidence, and real play all matter. The current rule is to prefer original/manual-backed evidence when a fidelity question exists, then use harness runs and player captures to tune what the game feels like in practice without losing architectural clarity.

What 1.0 means now that the project is prioritizing shell polish, public trust, and player-facing finish work.

Compact release-triage view for the scoped four-stage 1.0 launch after the latest reprioritization toward shell polish, trust, and player-facing finish work.

| Bucket | Issue | Owner | Status | Evidence | Next | Phase |
|---|---|---|---|---|---|---|
| | #61 | Shared | Watching | Hosted Stage 3->4 can still hit the empty-playfield edge case | Keep telemetry live and treat the next reproducible bad run as launch-blocking | Phase 1 |
| Must | #76 | Shared | Implemented on dev | Non-production lanes now use a production-score read-only mirror with local-only score saves by default | Refresh beta, confirm the read-only lane messaging and blocked submit path, then close if it holds | Phase 4 |
| Must | #85 | Shared | Open | Final release-readiness still needs security review, code/docs consistency, and a short player guide | Schedule the final release-readiness cycle once the shell/polish list is short | Phase 4 |
| Must | #86 / #103 / #105 | Codex | Active | The shell/control surface now owns guide, controls, scores, account, bug report, mute, pause, and settings | Finish and verify the compact icon rail, in-game help surfaces, and settings pause/resume behavior | Phase 3 |
| Must | #91 / #48 / #44 | Codex | Active | Top HUD, frame/bezel, and stage indicator now form one display-shell system | Tighten the cabinet-like display language and verify it across common desktop sizes | Phase 2 |
| Must | #82 / #49 / #60 | Codex | Queued | Wait-mode and score-view surfaces still need clearer, less fragile presentation | Rework score-panel layout and make scoreboard state changes more explicit | Phase 2 |
| Must | #74 / #47 / #38 / #45 | Codex | Open | Three-ship squadron readability and combat-feedback finish work still visibly affect perceived quality | Tighten squadron geometry and verify ship-loss / boss-hit feedback in beta | Phase 2 |
| Must | #87 / #106 | Codex | Open | Wait-mode/demo visual stability and between-level message presentation still read as unfinished | Stabilize attract presentation and standardize message display | Phase 2 |
| Must | #40 / #80 / #88 | Codex | Beta review | Capture/carry presentation is much healthier, but the remaining visible confidence pass still matters | Keep one more live confirmation while closing the capture presentation cluster | Phase 3 |
| Should | #71 / #78 / #77 / #73 | Codex | Low risk | Mute landed cleanly and the challenge/capture mechanic checks are mostly in confirmation territory now | Keep the mechanic checks green and close them as live confidence comes in | Phase 3 |
| Post-1.0 | #18 / #32 / #9 | Shared | Deferred | Stage 2 and Stage 4 tuning plus broader challenge-stage fidelity are now intentionally treated as 1.1 or later work | Revisit as an experimental balance/reference pass after 1.0 ships | Post-1.0 |

The main files and what they own.

`src/index.template.html` and `src/styles.css` define the page shell, settings UI, icon rail, in-game guide/controls overlays, layout, and in-game presentation. The served local development build is generated into `dist/dev/index.html` during build.

`src/js/00-boot.js` owns constants, build metadata, score/high-score state, audio, input handling, logging, and the UI state that surrounds the game loop.

`src/js/10-gameplay.js` owns stage spawning, enemy movement, scoring, dive logic, capture/rescue, carried-fighter interactions, ship loss, and the main simulation loop.

`src/js/20-render.js` owns sprite drawing, overlays, stage banners, HUD rendering, and the visual language that explains what is happening in play.

`src/js/90-harness.js` exposes deterministic setup helpers used by local Chrome harness scenarios so we can reproduce challenge, rescue, boss, and Stage 4 cases without depending only on random live play.

`tools/build/build-index.js` assembles the local dev build into `dist/dev/`, including the release dashboard, project guide, and player guide. `tools/build/promote-beta.js` and `tools/build/promote-production.js` snapshot that output into `dist/beta/` and `dist/production/`. `tools/build/check-publish-ready.js` verifies a lane is publishable from the current `HEAD`. `tools/build/publish-lane.js` publishes generated surfaces and the Pages workflow contract into `Aurora-Galactica`, where Pages should package committed artifacts rather than rebuild stale source. `tools/dev/local-resume.js` brings the local game and viewer back up together after a machine handoff.
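As a rough illustration of the lane model described above, the sketch below snapshots one lane directory into another with Node's `fs.cpSync`. It is not the project's `promote-beta.js`: the function name `promoteLane` and the clear-then-copy behavior are assumptions made for this example.

```javascript
const fs = require("node:fs");
const path = require("node:path");

// Hypothetical helper: copy a built lane (e.g. dist/dev) into a target lane
// (e.g. dist/beta). The real promote scripts may do more, such as rewriting
// build metadata; none of that is modeled here.
function promoteLane(distRoot, fromLane, toLane) {
  const source = path.join(distRoot, fromLane);
  const target = path.join(distRoot, toLane);
  if (!fs.existsSync(source)) {
    throw new Error(`Nothing to promote: ${source} does not exist`);
  }
  // Assumption: remove the old snapshot first so files deleted from the
  // source lane do not linger in the promoted lane.
  fs.rmSync(target, { recursive: true, force: true });
  fs.cpSync(source, target, { recursive: true });
  return target;
}
```

Under these assumptions, `promoteLane("dist", "dev", "beta")` would refresh `dist/beta/` from the current `dist/dev/` output. Treating the promoted lane as a full replacement keeps it disposable, which matches how the generated `dist/` lanes are described elsewhere in this guide.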

`tools/log-viewer/` serves the local artifact review app so repaired session videos, synced event streams, Codex prompts, and issue drafting stay in one place while debugging gameplay. It reads the recursive `harness-artifacts/` tree and expects each reviewable run folder to keep a `summary.json` beside its session log and repaired review video.

What is currently measured automatically and why it matters.

The evidence sources the project treats as authoritative or useful.

The 1981 Namco manual and curated original gameplay footage are the first stop for rule questions, scoring behavior, challenge structure, capture/rescue behavior, and visible arcade moments.

Walkthroughs and written references are used carefully for later-stage breadth, progression feel, and confirming that the game experience expands beyond the first few stages, but they do not override primary sources on rules.

The project already keeps focused notes for the first challenge stage, Stage 4 fairness, manual observations, and capture/debug cases so we stop rediscovering the same lessons from scratch.

How changes are expected to move from idea to confidence.

The best entry points for different kinds of work.

Local artifact review app. Start it with `npm run log-viewer` to inspect repaired videos, synced events, and draft issues. Runs must live under `harness-artifacts/` with a `summary.json` and neighboring session/video files.

Player-facing manual used by the in-game guide icon. This is the right place for controls, HUD reading, survival tips, and capture/rescue explanations.

Quick start, local run, harness commands, and repo-level overview.

Current working plan, known problems, and active priorities.

Release milestones and what belongs to 1.0 versus later.

Explicit final-release review for 1.0 covering security posture, docs/public-surface consistency, lane discipline, and accepted launch risks.

Short system layout and how build, deploy, harness, and reference flows fit together.

Where specific gameplay systems live in code.

Current inventory of runtime, hosting, feedback, and release services, plus what stays browser-local.

How fidelity questions are grounded in durable source material and issues.

Collaborator onboarding and workflow expectations.

How to clone the dev repo on a second machine, stay in sync cleanly, and publish from either workstation.

Maintained prompt text for starting a home-machine Codex session with the correct repo roles, sync flow, and local service startup commands.

Versioning and release recommendation rules.

Visual timeline of completed, in-progress, and upcoming release steps.

What updates automatically and what should be intentionally maintained.

The most likely next moves based on current project state.

Quick-start, run/build commands, harness commands, and the repo-level overview are pulled directly from the maintained README.

Generated from README.md during build.

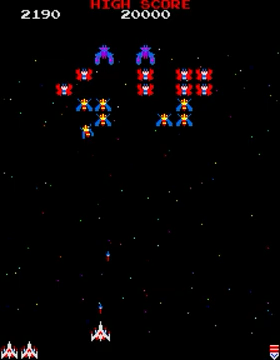

Classic fixed-screen browser shooter with keyboard controls, capture-and-rescue mechanics, multi-stage progression, and arcade-style tuning.

Current shipping target:

- Stage 1
- Stage 2
- Stage 3 challenging stage
- Stage 4

Expansion beyond Stage 4 is currently treated as post-1.0 work unless it directly supports polishing this slice.

Release dashboard:

- https://sgwoods.github.io/Aurora-Galactica/release-dashboard.html
- `/Users/stevenwoods/Documents/Codex-Test1/release-dashboard.json`

Project guide:

- https://sgwoods.github.io/Aurora-Galactica/project-guide.html
- `/Users/stevenwoods/Documents/Codex-Test1/project-guide.json`
- `/Users/stevenwoods/Documents/Codex-Test1/README.md`
- `/Users/stevenwoods/Documents/Codex-Test1/PLAN.md`
- `/Users/stevenwoods/Documents/Codex-Test1/PRODUCT_ROADMAP.md`
- `/Users/stevenwoods/Documents/Codex-Test1/ARCHITECTURE.md`
- `/Users/stevenwoods/Documents/Codex-Test1/SOURCE_MAP.md`
- `/Users/stevenwoods/Documents/Codex-Test1/EXTERNAL_SERVICES.md`
- `/Users/stevenwoods/Documents/Codex-Test1/REFERENCE_BASELINE.md`
- `/Users/stevenwoods/Documents/Codex-Test1/CONTRIBUTING.md`
- `/Users/stevenwoods/Documents/Codex-Test1/RELEASE_POLICY.md`

Player guide:

- https://sgwoods.github.io/Aurora-Galactica/player-guide.html
- `/Users/stevenwoods/Documents/Codex-Test1/player-guide.json`
- `/Users/stevenwoods/Documents/Codex-Test1/dist/dev/player-guide.html`

Repository roles:

- https://github.com/sgwoods/Codex-Test1
- https://github.com/sgwoods/Aurora-Galactica
- `/Users/stevenwoods/Documents/Codex-Test1/EXTERNAL_SERVICES.md`

After GitHub Pages deploys, play at:

- https://sgwoods.github.io/Aurora-Galactica/
- https://sgwoods.github.io/Aurora-Galactica/beta/

The root Aurora build is the official public production lane, even while the product is still prerelease in SemVer terms. The `/beta/` lane is a manually promoted public checkpoint used for less-frequent milestone playtesting. Day-to-day engineering work continues in `Codex-Test1` as the pre-production development line.
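To make the hosted lane layout concrete, here is a minimal sketch that maps a URL path to a lane name. The function `laneForPath` and the lane labels are illustrative assumptions, not code from the repository; only the `/Aurora-Galactica/` root and `/beta/` paths come from the text above.

```javascript
// Hypothetical helper. Only the URL shapes come from the README text.
function laneForPath(pathname) {
  const base = "/Aurora-Galactica/";
  // Assumption: anything served outside the public Pages base is a local/dev build.
  if (!pathname.startsWith(base)) return "dev";
  const rest = pathname.slice(base.length);
  // The /beta/ subfolder is the manually promoted checkpoint lane.
  if (rest === "beta" || rest.startsWith("beta/")) return "beta";
  // Everything else under the root is the production lane.
  return "production";
}
```

A helper of this shape could, for example, let a page decide whether to show read-only lane messaging, though how the real build distinguishes lanes is not stated in this guide.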

Current score/data policy:

Optional non-production test pilot configuration:

- `TEST_ACCOUNT_EMAILS` to enable one or more specific beta/dev pilot accounts for auth and write-flow testing
- `TEST_ACCOUNT_USER_IDS` to exclude those pilots' scores from shared leaderboard views
- `/Users/stevenwoods/Documents/Codex-Test1/.env.local.example`
- My Scores becomes available
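The exclusion behavior described for `TEST_ACCOUNT_USER_IDS` can be sketched roughly as follows. This assumes the variable holds a comma-separated list and that score rows look like `{ userId, score }`; neither detail is stated in the source, and `parseList` and `sharedLeaderboard` are hypothetical names.

```javascript
// Assumption: the variable holds comma-separated values. The source does not
// state the format, so adjust the separator if the real config differs.
function parseList(value) {
  return (value || "")
    .split(",")
    .map((item) => item.trim())
    .filter(Boolean);
}

// Hypothetical score rows of the shape { userId, score }.
// Returns only the rows that should appear in shared leaderboard views.
function sharedLeaderboard(rows, env) {
  const excluded = new Set(parseList(env.TEST_ACCOUNT_USER_IDS));
  return rows.filter((row) => !excluded.has(row.userId));
}
```

In a Node build script this might be called as `sharedLeaderboard(rows, process.env)`; an unset variable simply excludes nobody.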

- `cd /Users/stevenwoods/Documents/Codex-Test1`
- `npm run build`
- `npm run local:resume`
- http://localhost:8000
- http://127.0.0.1:4311/

If you only want the game server, the lower-level command is:

- `python3 -m http.server 8000 --directory dist/dev`

To stop the locally tracked game and viewer services cleanly:

- `npm run local:stop`

- Left/Right or A/D: Move
- Ctrl: Left-handed cabinet-style move left on the web
- Command: Left-handed cabinet-style move right on the web
- Space: Fire (arcade-style shot cap)
- P or pause icon: Pause
- F: Fullscreen
- U: Ultra scale toggle
- Enter: Start / Restart
- F1 or ?: Open in-game feedback form
- ℹ icon: Open the player guide inside the game
- 🕹 icon: Open the controls reference inside the game
- 🎞 icon: Watch recent local replays saved on this device
- Export Log button: Download the current gameplay session as JSON

- `src/index.template.html`
- `src/styles.css`
- `src/js/00-boot.js`
- `src/js/10-gameplay.js`
- `src/js/20-render.js`
- `src/js/90-harness.js`
- `/Users/stevenwoods/Documents/Codex-Test1/SOURCE_MAP.md`
- `/Users/stevenwoods/Documents/Codex-Test1/CONTRIBUTING.md`
- `/Users/stevenwoods/Documents/Codex-Test1/HOME_MACHINE_SETUP.md`
- `/Users/stevenwoods/Documents/Codex-Test1/HOME_CODEX_PROMPT.md`
- `/Users/stevenwoods/Documents/Codex-Test1/codex-skills/aurora-dev-refresh/SKILL.md`
- `/Users/stevenwoods/Documents/Codex-Test1/ARCHITECTURE.md`
- `/Users/stevenwoods/Documents/Codex-Test1/REFERENCE_BASELINE.md`

- `dist/dev/index.html`
- `npm run build`
- `/Users/stevenwoods/Documents/Codex-Test1/dist/dev/`
- `/Users/stevenwoods/Documents/Codex-Test1/dist/beta/`
- `/Users/stevenwoods/Documents/Codex-Test1/dist/production/`
- `dist/`; treat them as disposable build output.
- `npm run log-viewer`

Then open:

- http://127.0.0.1:4311/

The viewer loads repaired run videos, keeps the event stream aligned beside playback, supports paused zoom/pan and region clipping, and can draft Codex context or GitHub issues from the selected moment. It expects run artifacts to live under:

- `/Users/stevenwoods/Documents/Codex-Test1/harness-artifacts/`

With one `summary.json` per run folder and neighboring files such as:

- `neo-galaga-session-*.json`
- `neo-galaga-video-*.review.webm`

The viewer discovers runs recursively, so batch folders may contain nested run folders as long as each run keeps that local structure.
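The recursive discovery rule above can be sketched as a small directory walk. This is an illustration under stated assumptions, not the log viewer's actual code: `findRunFolders` is a hypothetical name, and a "run folder" is assumed to be any directory that directly contains `summary.json`.

```javascript
const fs = require("node:fs");
const path = require("node:path");

// Hypothetical sketch: walk a harness-artifacts tree and collect run folders.
// Assumption: a run folder is any directory that directly holds summary.json.
function findRunFolders(root) {
  const runs = [];
  const entries = fs.readdirSync(root, { withFileTypes: true });
  const fileNames = entries.filter((e) => e.isFile()).map((e) => e.name);

  if (fileNames.includes("summary.json")) {
    runs.push({
      dir: root,
      // Neighboring files follow the naming patterns quoted in the README.
      sessionLog: fileNames.find((n) => /^neo-galaga-session-.*\.json$/.test(n)) || null,
      reviewVideo: fileNames.find((n) => /^neo-galaga-video-.*\.review\.webm$/.test(n)) || null,
    });
  }

  // Recurse so batch folders may contain nested run folders.
  for (const entry of entries) {
    if (entry.isDirectory()) {
      runs.push(...findRunFolders(path.join(root, entry.name)));
    }
  }
  return runs;
}
```

Returning `null` for a missing session log or review video, rather than skipping the run, would let a viewer flag incomplete runs instead of silently hiding them; whether the real viewer does this is not stated here.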

beta lane with:

- `npm run promote:beta`

This creates or refreshes:

- `/Users/stevenwoods/Documents/Codex-Test1/dist/beta/`

from the current dev build in:

- `/Users/stevenwoods/Documents/Codex-Test1/dist/dev/`

Publish the generated `dist/beta/` snapshot into https://github.com/sgwoods/Aurora-Galactica when you want the hosted https://sgwoods.github.io/Aurora-Galactica/beta/ lane to move.

- `npm run promote:production`

This creates or refreshes:

- `/Users/stevenwoods/Documents/Codex-Test1/dist/production/`

- `npm run sync:public`

This updates the canonical Aurora public/status files:

- `/Users/stevenwoods/GitPages/public/aurora-galactica.html`
- `/Users/stevenwoods/GitPages/public/data/projects/aurora-galactica.json`

And it keeps the legacy compatibility aliases current:

- `/Users/stevenwoods/GitPages/public/codex-test1.html`
- `/Users/stevenwoods/GitPages/public/data/projects/codex-test1.json`

It does not update `/Users/stevenwoods/GitPages/public/index.html` directly.

- `npm run verify:public`

- `tools/build/build-index.js`
- `tools/build/sync-public-pages.js`
- `tools/build/verify-public-sync.js`
- `dist/dev/build-info.json`
- `release-notes.json`
- `/Users/stevenwoods/Documents/Codex-Test1/RELEASE_POLICY.md`
- `/Users/stevenwoods/Documents/Codex-Test1/RELEASE_READINESS_REVIEW.md`
- `/Users/stevenwoods/Documents/Codex-Test1/PRODUCT_ROADMAP.md`

post-1.0, pre-2.0 stretch goal:

- `/Users/stevenwoods/Documents/Codex-Test1/project-guide.json`
- `/Users/stevenwoods/Documents/Codex-Test1/dist/dev/project-guide.html`
- `/Users/stevenwoods/Documents/Codex-Test1/dist/dev/player-guide.html`
- `npm run build` from the guide config and the maintained docs above.

- `/Users/stevenwoods/Documents/Codex-Test1/src/`
- `/Users/stevenwoods/Documents/Codex-Test1/README.md`
- `/Users/stevenwoods/Documents/Codex-Test1/PLAN.md`
- `/Users/stevenwoods/Documents/Codex-Test1/ARCHITECTURE.md`
- `/Users/stevenwoods/Documents/Codex-Test1/SOURCE_MAP.md`
- `/Users/stevenwoods/Documents/Codex-Test1/project-guide.json`
- `/Users/stevenwoods/Documents/Codex-Test1/release-dashboard.json`
- `/Users/stevenwoods/Documents/Codex-Test1/release-notes.json`
- `npm run build`
- `/Users/stevenwoods/Documents/Codex-Test1/dist/dev/index.html`
- `/Users/stevenwoods/Documents/Codex-Test1/dist/dev/project-guide.html`
- `/Users/stevenwoods/Documents/Codex-Test1/dist/dev/release-dashboard.html`
- `/Users/stevenwoods/Documents/Codex-Test1/dist/dev/build-info.json`
- `npm run local:resume`
- http://localhost:8000
- http://127.0.0.1:4311/
- `dist/dev/`, not raw source.
- `/Users/stevenwoods/Documents/Codex-Test1/harness-artifacts/`
- `npm run promote:beta`
- `/Users/stevenwoods/Documents/Codex-Test1/dist/beta/`
- `npm run publish:beta`
- `HEAD` automatically:
- `dist/dev`
- `dist/beta`
- `npm run promote:beta`
- `npm run publish:check:beta`
- `npm run publish:beta:raw`
- `sgwoods/Aurora-Galactica`, copies: `/Users/stevenwoods/Documents/Codex-Test1/dist/beta/` into the `/beta/` folder, commits, and pushes.
- https://sgwoods.github.io/Aurora-Galactica/beta/
- `npm run approve:beta`
- `/Users/stevenwoods/Documents/Codex-Test1/dist/beta/approved-build-info.json`
- `npm run publish:production`
- `dist/dev`
- `dist/beta`
- `dist/production`
- `npm run approve:beta`

`Aurora-Galactica` Pages should package the committed production and beta artifacts directly; it should not rebuild the live production root from stale public-repo source files.

- `npm run promote:production`
- `npm run publish:check:production`
- `npm run publish:production:raw`
- `sgwoods/Aurora-Galactica`, copies: `/Users/stevenwoods/Documents/Codex-Test1/dist/production/` into the root published surface, commits, and pushes.
- https://sgwoods.github.io/Aurora-Galactica/
- `npm run sync:public`
- `sgwoods/public`, not the playable game itself.
- `/Users/stevenwoods/Documents/Codex-Test1/release-history/`
- `.github/workflows/pages.yml`
- `.github/workflows/sync-public-pages.yml`
- `/Users/stevenwoods/Documents/Codex-Test1/reference-artifacts/`
- `/Users/stevenwoods/Documents/Codex-Test1/reference-artifacts/analyses/first-challenge-stage/README.md`

- `#130` is an explicit pre-1.0 release operation.
- 1.0 production leaderboard from a clean baseline rather than carrying forward pre-1.0 scores from moving tuning and rule sets.
- scores table. It does not delete pilot accounts.
- `SUPABASE_SERVICE_ROLE_KEY=... npm run leaderboard:inspect:production`
- 1.0 candidate:
- `SUPABASE_SERVICE_ROLE_KEY=... npm run leaderboard:reset:production`
- 1.0 launch:
- 1.0 production build
- intentionally not runnable from the browser auth path.

- `package.json`
- `/Users/stevenwoods/Documents/Codex-Test1/release-notes.json`
- `/Users/stevenwoods/Documents/Codex-Test1/release-history/`
- `dist/production/build-info.json` and `release-notes.json`
- `PUBLIC_REPO_SYNC_TOKEN` secret when available
- `contents:write` access to `sgwoods/public`
- `0.5.0+build.9.sha.457df28`
- `0.5.0-beta.1+build.9.sha.457df28.beta`
- `/Users/stevenwoods/Documents/Codex-Test1/RELEASE_POLICY.md`

- Export Log button
- 🎞 replay surface is separate and keeps recent local replay state in browser storage
- `harness-artifacts/`
- `/Users/stevenwoods/Documents/Codex-Test1/ARTIFACT_POLICY.md`
- `.webm` and `.json` artifacts into `harness-artifacts/`
- `/Applications/Google Chrome.app`

npm run harness -- --session /absolute/path/to/neo-galaga-session.json

npm run harness -- --scenario stage3-challenge

npm run harness -- --scenario stage3-challenge-persona --persona expert

npm run harness -- --scenario stage3-transition

npm run harness -- --scenario stage3-perfect-transition

npm run harness -- --scenario stage4-five-ships

npm run harness -- --scenario stage4-survival

npm run harness -- --scenario stage1-descent

npm run harness -- --scenario rescue-dual

npm run harness -- --scenario capture-rescue-dual

npm run harness -- --scenario carried-boss-diving-release

npm run harness -- --scenario carried-boss-formation-hostile

npm run harness -- --scenario natural-capture-cycle

npm run harness -- --scenario stage4-capture-pressure

npm run harness -- --scenario boss-first-hit

npm run harness -- --scenario second-capture-current

npm run harness -- --scenario stage12-variety

npm run harness -- --scenario stage4-squadron-bonus

npm run harness -- --scenario carried-fighter-standby

npm run harness -- --scenario carried-fighter-attacking

npm run harness:batch -- --profile personas

npm run harness:batch -- --profile distribution

npm run harness:batch -- --profile quick

npm run harness:batch -- --profile fidelity

npm run harness:batch -- --profile default

npm run harness:batch -- --profile deep

- `/Users/stevenwoods/Documents/Codex-Test1/harness-artifacts/`
- `summary.json`
- `neo-galaga-session-*.json`
- `neo-galaga-video-*.review.webm`
- `.webm` or `.mkv` can still be discovered, but the repaired `.review.webm` is the preferred review artifact.
- `npm run harness:check:video-artifact`
- `npm run harness:repair:videos`
- `summary.json` beside the generated artifacts, including:
- `novice`
- `advanced`
- `expert`
- `professional`
- `stage4-capture-pressure`
- `stage4-five-ships` or `stage4-survival`

npm run harness -- --scenario stage1-opening --persona novice

npm run harness -- --scenario stage2-opening --persona advanced

npm run harness -- --scenario stage4-survival --persona expert

npm run harness:batch -- --profile distribution

npm run harness:batch -- --profile distribution --repeats 4

- `novice`
- `advanced`
- `expert`
- `professional`
- `.webm` contains audio
- `batch-report.json` with aggregate challenge hits, ship losses, total duration, and audio failures
- `tuning-report.json` with prioritized findings to guide the next gameplay pass
- `.review.webm` artifacts and have their neighboring `summary.json` updated with artifact-quality metadata:
- `npm run harness:repair:videos`
- `quick`: about 1.5-2 minutes
- `default`: about 3-4 minutes
- `deep`: about 5-7 minutes
- `npm run harness:analyze -- --run /absolute/path/to/harness-artifacts/run-folder`
- `npm run harness:tune -- --batch /absolute/path/to/harness-artifacts/batch-folder`
- `harness-artifacts/` and analyze it in one step:
- `npm run harness:import-latest`
- `harness-artifacts/`
- `npm run harness:check-latest`
- `npm run harness:import-latest -- --session-id ngt-1773602145011-2`
- `npm run harness:import-latest -- --source /absolute/path/to/folder`
- `harness:check-latest` keeps a small local state file in `harness-artifacts/` so scheduled scans can safely skip runs that were already imported

The game includes a floating Feedback button (top-right).

- Feature Request and Bug Report submissions post to Web3Forms using a local build-time access key
- `mailto:` draft

One-time setup:

- `.env.local` as `WEB3FORMS_ACCESS_KEY`

Second-machine setup, sync strategy, and publish workflow are documented in the dedicated home-machine guide.

Generated from HOME_MACHINE_SETUP.md during build.

This guide is the recommended way to work on Aurora Galactica from a second machine while staying in sync cleanly with the main workstation.

Use Codex-Test1 as the only development repo on both machines.

- `Codex-Test1`
- `Aurora-Galactica`

For a maintained first-session prompt you can paste into the home Codex instance, use:

- `/Users/stevenwoods/Documents/Codex-Test1/HOME_CODEX_PROMPT.md`

Install on the home machine:

- `git`
- `node` and `npm`
- `gh`

Optional but useful:

- `git clone https://github.com/sgwoods/Codex-Test1.git`
- `cd Codex-Test1`
- `npm install`
- `gh auth login`
- `git pull --rebase origin main`
- `npm install`
- `npm run build`
- `npm run local:resume`
- http://localhost:8000
- http://127.0.0.1:4311/

If you only want the game without the viewer, the lower-level command is:

- `python3 -m http.server 8000 --directory dist/dev`

When you want to stop the locally tracked services cleanly:

- `npm run local:stop`

Run a scenario:

- `npm run harness -- --scenario stage1-opening`

Run a batch:

- `npm run harness:batch -- --profile quick`

Start by itself:

- `npm run log-viewer`

Open:

- http://127.0.0.1:4311/

The viewer expects artifacts under:

- `/Users/stevenwoods/Documents/Codex-Test1/harness-artifacts/`

Player-triggered exported logs and videos are different:

- Downloads folder
- `harness-artifacts/` when you want them in the developer review archive:
- `npm run harness:import-latest`

See:

- `~/Documents/Codex-Test1/ARTIFACT_POLICY.md`

On the home machine that means the same repo-relative folder inside your local clone.

The simplest rule is:

Recommended pattern:

- `git pull --rebase origin main`
- `git add ...`
- `git commit -m "..."`
- `git push origin main`

For larger changes:

- `git checkout -b codex/my-feature`
- `git push -u origin codex/my-feature`

Recommended use:

- `main`
- `codex/...`

When a build is ready for the hosted beta lane:

npm run build

npm run promote:beta

npm run publish:check:beta

npm run publish:beta

This publishes `/Users/stevenwoods/Documents/Codex-Test1/dist/beta/` into the public Aurora beta surface.

When a build is ready for the hosted production lane:

npm run build

npm run promote:production

npm run publish:check:production

npm run publish:production

This publishes `/Users/stevenwoods/Documents/Codex-Test1/dist/production/` into the public Aurora production surface.

- `src/`
- `dist/`
- `Codex-Test1` for development
- `Aurora-Galactica` only as the release target

If you want the least-friction two-machine workflow:

- `Codex-Test1`
- `npm run build`
- `npm run local:resume`

Current state, known problems, active workstreams, and release-critical priorities come directly from the working plan.

Generated from PLAN.md during build.

- `dist/production/index.html`
- `package.json` for the pre-production engineering line
- `dist/production/build-info.json`
- https://sgwoods.github.io/Aurora-Galactica/
- `mailto:` draft
- `public` repo contract:
- `data/projects/aurora-galactica.json`
- `data/projects/codex-test1.json`
- `/Users/stevenwoods/Documents/Codex-Test1/reference-artifacts/` so rules and visual comparisons are not stranded in Downloads
- `WEB3FORMS_ACCESS_KEY` for direct feedback delivery
- 1.0 slice
- visible arcade event before the four-stage release is considered complete

- quick batch: `/Users/stevenwoods/Documents/Codex-Test1/harness-artifacts/batch-quick-2026-03-18T20-22-44-404Z`
- fidelity batch: `/Users/stevenwoods/Documents/Codex-Test1/harness-artifacts/batch-fidelity-2026-03-18T20-26-14-680Z`
- 0 audio failures in the latest quick batch)
- 26/40 hits (65% hit rate in the latest quick batch)
- 4 five-ship scenario still survives the full scenario window, but the average progression is still shallow and losses skew toward collisions
- 4 survival scenario still reaches only Stage 4, confirming later-stage progression remains limited even when survivability improves
- 1 descent baseline is about 1.20s
- 28px
- 1.0 refresh on 2026-03-21 shows:
- 23/40

- `/Users/stevenwoods/Documents/Codex-Test1/RELEASE_POLICY.md` for bump guidance
- `/Users/stevenwoods/Documents/Codex-Test1/PRODUCT_ROADMAP.md` to decide when a minor-version milestone has actually been reached
- `/Users/stevenwoods/Documents/Codex-Test1/reference-artifacts/` as the durable home for manuals, curated clips, and analysis notes
- 4 onward
- `.webm` + `.json` artifacts as the default tuning input
- Submit Run flow that packages a gameplay video and matching JSON log together

- #3 Synthetic user agent for headless gameplay with session replay
- #4 Tune Stage 1 fidelity against original Galaga reference footage
- #5 Add replay / watch mode for recorded sessions
- #6 Add gameplay session logging and export
- #7 Verify FormSubmit activation against Modem inbox
- #8 Design Submit Run flow using GitHub issues plus Google Drive artifact storage
- #20 Model manual-accurate captured-fighter destruction scoring
- #21 Add special three-ship attack squadron bonuses from the manual
- #22 Implement manual-accurate challenge-stage bonus scoring
- #23 Add Galaga-style results screen before initials entry
- #81 Add commentary-ready gameplay telemetry for narrated replays

This is now the operating plan for the project.

The project is now targeting a smaller 1.0 sub-goal first:

- 1 through Stage 4 experience

Expansion beyond Stage 4, new theme systems, and broader content breadth are still valuable, but they are now explicitly post-1.0 work unless they are needed to support this smaller shipped slice.

1.0.0 is now live.

Current release checkpoint:

- `1.0.0-beta.1+build.276.sha.a59c5ad.beta`
- `1.0.0+build.276.sha.a59c5ad`
- `publish:beta -> approve:beta -> publish:production`
- fields
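The checkpoint version strings above share a recognizable shape: a SemVer core, an optional prerelease tag, and build metadata carrying a build number, a short commit hash, and sometimes a lane suffix. The parser below is a minimal sketch of reading that shape; `parseBuildVersion` is a hypothetical name, and the field layout is inferred only from the example strings in this guide, not from `RELEASE_POLICY.md`.

```javascript
// Hypothetical parser for the version strings shown in this guide.
// Assumed shape: MAJOR.MINOR.PATCH[-prerelease]+build.N.sha.HASH[.lane]
const BUILD_VERSION =
  /^(\d+)\.(\d+)\.(\d+)(?:-([0-9A-Za-z.-]+))?\+build\.(\d+)\.sha\.([0-9a-f]+)(?:\.([a-z]+))?$/;

function parseBuildVersion(version) {
  const match = BUILD_VERSION.exec(version);
  if (!match) return null; // not in the assumed shape
  const [, major, minor, patch, prerelease, build, sha, lane] = match;
  return {
    major: Number(major),
    minor: Number(minor),
    patch: Number(patch),
    prerelease: prerelease || null, // e.g. "beta.1"
    build: Number(build),           // e.g. 276
    sha,                            // short commit hash, e.g. "a59c5ad"
    lane: lane || null,             // trailing lane suffix such as "beta", if present
  };
}
```

Under these assumptions, the beta and production strings in the checkpoint parse to the same `build` and `sha`, which is one way to confirm that a production publish corresponds to the approved beta build.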

- #130 is complete

Current coding priority:

- 1.0.0 stable in production
- #44 and the broader refinement/admin/identity work into 1.x

What changed since the last full review:

- #48, #85, #125, #61, #76, #79, #82, #106, #107, #108, #109, #112, #113
- 1.0 path:
- #44
- 1.0
- #130

| Bucket | Issue | Owner | Status | Evidence | Next Action | Plan Stage |
|---|---|---|---|---|---|---|
| Can Slip | #96 / #98 | Shared | Watch / close candidate | Recorder trust and release-pipeline cleanup both improved significantly. These no longer look like primary launch blockers. | Keep the checks green and close if no new regression appears during final signoff. | Phase 4 |
| Can Slip | #103 / #105 / #110 / #114 / #115 / #116 / #117 / #119 / #120 | Shared | Post-blocker polish | The shell, pilot, popup, and replay surfaces are all much stronger now. Remaining work is polish/expansion unless a new trust bug appears. | Keep improving in 1.x unless a concrete launch issue reappears. | Phase 3 |
| Post-1.0 | #44 / #121 / #124 / #126 / #127 / #128 / #129 | Shared | Planned | Bottom-right stage indicator, shared pilot media, control-centre/admin tooling, cleaner non-production backend split, permanent pilot identity/account deletion, branded email polish, and version-aware leaderboard tracking all belong to the 1.x refinement track. | Keep them in the structured 1.x program, not the 1.0 blocker path. | Post-1.0 |

Items currently treated as post-1.0 unless they become necessary for external playtesting or operational stability:

- #69 remote gameplay logs and optional video artifacts
- #70 homepage recent plays / watch links
- #121 shared authenticated pilot media and Aurora-owned YouTube publishing
- #17 broader reference baseline work
- #18 / #32 experimental Stage 2 / 4 tuning for 1.1
- #9 broader challenge-stage fidelity / variation work
- #19 later-run collision-chain regression outside the four-stage slice
- 1.0, pre-2.0 stretch goal rather than a launch requirement

- `/Users/stevenwoods/Documents/Codex-Test1/reference-artifacts/analyses/first-challenge-stage/README.md`

After each material step:

- 4 as the end of the current 1.0 game loop
- 1 opening fidelity
- 2 opening pressure
- 3 challenge-stage fidelity
- 4 survival / fairness
- 4 failures without breaking the stronger Stage 1-3 experience
- sweeps that undo Stage 4 progress

- 1.0 candidate for the four-stage slice
- 1.0 roadmap
- 4 fairness for the four-stage 1.0 slice
- 1.0 finishing polish once Stage 4 is good enough
- 4 survivability and fairness so the four-stage loop feels winnable
- 3 experience without destabilizing hit rate or readability

present in the live four-stage slice, not just harness coverage

identity so the product feels intentionally shippable

four-stage 1.0 slice

breadth as post-1.0 roadmap items

Release milestones and what belongs to 1.0 versus later are pulled from the product roadmap.

Generated from PRODUCT_ROADMAP.md during build.

- `1.0.x` post-launch stabilization
- identity, admin, and media work into the planned 1.x track

Definition:

- Stage 1
- Stage 2
- Stage 3 challenging stage
- Stage 4

Quality bar:

This is the current product target. Expansion beyond Stage 4 is intentionally secondary until this slice is polished.

Target outcome:

Key issue groups:

- #4 Stage 1 fidelity
- #9 challenge-stage fidelity
- #18 Stage 4 survivability
- #32 Stage 2 opening pressure / spacing feel
- #35 capture-driven life-loss accounting in self-play summaries
- #38 ship-hit explosion / sound / post-hit pause feel
- #39 repeated fighter capture behavior within a stage
- #14 second captured-fighter behavior research
- gameplay and shows its bonus clearly during the four-stage slice
- #31 release date display refinement

Suggested versioning:

- `0.5.x-alpha`

Execution model:

means

without re-learning the whole project

Target outcome:

Key issue groups:

- #7 FormSubmit / Modem viability
- #8 structured run submission
- #5 replay / watch mode
- #3 synthetic user agent / session replay work
- #25 daily status automation
- #31 release display refinements if still open

Suggested versioning:

- `0.6.x-alpha`

Target outcome:

Key issue groups:

- #19 Stage 2/late-run collision chain regressions
- #17 stronger baseline against original Galaga
- #69 remote gameplay logs and optional video artifacts
- #70 homepage recent plays and linked run viewing
- #81 commentary-ready gameplay telemetry for narrated replays
- including exact timing, composition, and scoring triggers
- #26 through #30

Suggested versioning:

- `0.7.x-alpha` and beyond

Target outcome:

testing is worth the overhead

Suggested versioning:

- `0.8.0-beta.1`
- `0.9.x-rc`

Target outcome:

Suggested versioning:

- `1.0.0`

Target outcome:

Key issue groups:

Suggested versioning:

- `1.0.x`
- `1.1.x`

Target outcome:

Key issue groups:

- #111 extract a shared arcade platform for Galaga-family cabinet shooters
- `gameDef` / game-pack extraction

Suggested versioning:

- `1.1.x`
- `1.2.x`

Target outcome:

Key issue groups:

- #121 shared authenticated pilot media and YouTube publishing

Suggested versioning:

- `1.3.x`
- `1.4.x`
- `1.5.x`
- `2.0.0`

These are the architectural themes we should keep capturing incrementally during the v1 push so they can become the basis of a focused post-v1 platform plan.

- `gameDef` structures
- #111 as the early post-1.0 umbrella for turning Aurora into a shared arcade platform that can support Galaga variants, Galaxian, and other similar cabinet shooters

dual-fighter behavior are less entangled with the core update loop

runtime refactors

real and already helping Aurora

the four-stage slice is stable enough that theming work does not destabilize launch

catalog after 1.0, while keeping the current local-first debugging flow as the default

2.0 stretch goal only after pilot identity, local replay, and the early post-1.0 platform seams are stable enough to support it cleanly

and other future variants without pausing product progress now

PATCH bump is enough versus when a MINOR bump is justified

System layout, build flow, deploy flow, and harness boundaries come directly from the architecture notes.

Generated from ARCHITECTURE.md during build.

This document is the short technical map for how the project is organized and how work should flow through it.

/Users/stevenwoods/Documents/Codex-Test1/src/index.template.html
/Users/stevenwoods/Documents/Codex-Test1/src/styles.css
/Users/stevenwoods/Documents/Codex-Test1/src/js/00-boot.js
/Users/stevenwoods/Documents/Codex-Test1/src/js/10-gameplay.js
/Users/stevenwoods/Documents/Codex-Test1/src/js/20-render.js
/Users/stevenwoods/Documents/Codex-Test1/src/js/90-harness.js
/Users/stevenwoods/Documents/Codex-Test1/dist/dev/index.html
/Users/stevenwoods/Documents/Codex-Test1/dist/dev/build-info.json
/Users/stevenwoods/Documents/Codex-Test1/dist/production/index.html
/Users/stevenwoods/Documents/Codex-Test1/dist/production/build-info.json
/Users/stevenwoods/Documents/Codex-Test1/dist/beta/
/Users/stevenwoods/Documents/Codex-Test1/tools/build/build-index.js
/Users/stevenwoods/Documents/Codex-Test1/dist/dev/index.html
/Users/stevenwoods/Documents/Codex-Test1/dist/dev/build-info.json
/Users/stevenwoods/Documents/Codex-Test1/tools/build/promote-production.js
/Users/stevenwoods/Documents/Codex-Test1/dist/production/index.html
/Users/stevenwoods/Documents/Codex-Test1/dist/production/build-info.json
/Users/stevenwoods/Documents/Codex-Test1/tools/dev/local-resume.js
dist/dev game server and the log viewer together for machine handoff/debugging
/Users/stevenwoods/Documents/Codex-Test1/.github/workflows/pages.yml
Aurora-Galactica repo.
Codex-Test1 produces generated artifacts in dist/
Aurora-Galactica is the public artifact host for:
/
/beta/
/Users/stevenwoods/Documents/Codex-Test1/tools/build/publish-lane.js
/Users/stevenwoods/Documents/Codex-Test1/tools/build/check-publish-ready.js
/Users/stevenwoods/Documents/Codex-Test1/tools/build/sync-public-pages.js
/Users/stevenwoods/Documents/Codex-Test1/.github/workflows/sync-public-pages.yml
sgwoods/public.
/Users/stevenwoods/Documents/Codex-Test1/tools/harness/run-gameplay.js
/Users/stevenwoods/Documents/Codex-Test1/tools/harness/analyze-run.js
/Users/stevenwoods/Documents/Codex-Test1/tools/harness/run-batch.js
/Users/stevenwoods/Documents/Codex-Test1/tools/harness/tuning-report.js
/Users/stevenwoods/Documents/Codex-Test1/tools/harness/scenarios/
/Users/stevenwoods/Documents/Codex-Test1/tools/log-viewer/server.js
/Users/stevenwoods/Documents/Codex-Test1/tools/log-viewer/index.html
/Users/stevenwoods/Documents/Codex-Test1/tools/log-viewer/app.js
/Users/stevenwoods/Documents/Codex-Test1/tools/log-viewer/styles.css
/Users/stevenwoods/Documents/Codex-Test1/harness-artifacts/
summary.json
neo-galaga-session-*.json
neo-galaga-video-*.review.webm when available
summary.json as the run index and then resolves the neighboring session/video files from the summary metadata.
.json and .webm are browser download artifacts first
.json and .webm can then be imported and analyzed
harness-artifacts/ folder structure and have a summary.json beside them.
/Users/stevenwoods/Documents/Codex-Test1/ARTIFACT_POLICY.md
/Users/stevenwoods/Documents/Codex-Test1/reference-artifacts/
/Users/stevenwoods/Documents/Codex-Test1/reference-artifacts/manuals/galaga-1981-namco/
/Users/stevenwoods/Documents/Codex-Test1/reference-artifacts/walkthroughs/trueachievements-galaga/

These should change carefully and usually only with reference evidence:

These are expected to change often:

The project is moving toward two parallel tracks:

That split should let collaborators work with less conflict and clearer ownership.

Shortly after 1.0, this codebase should start moving toward a shared arcade platform rather than remaining a one-off Aurora-only runtime.

Tracked umbrella:

#111 shared arcade platform extraction for Galaga-family cabinet shooters

The intended stable/shared layer is:

The intended configurable/game-pack layer is:

The goal is to reduce churn in mature infrastructure while letting future games such as Galaxian, Aurora variants, or similar fixed-screen cabinet shooters reuse the stable platform with smaller game-specific packs.

Gameplay ownership and where to edit specific systems are pulled from the source map.

Generated from SOURCE_MAP.md during build.

This file is the quick orientation guide for the current codebase. It is meant to answer "where does this behavior live?" before someone starts changing gameplay or tuning values.

/Users/stevenwoods/Documents/Codex-Test1/src/index.template.html
/Users/stevenwoods/Documents/Codex-Test1/src/styles.css
/Users/stevenwoods/Documents/Codex-Test1/src/js/00-boot.js
/Users/stevenwoods/Documents/Codex-Test1/src/js/10-gameplay.js
bullet logic, ship loss, and the main update loop
/Users/stevenwoods/Documents/Codex-Test1/src/js/20-render.js
/Users/stevenwoods/Documents/Codex-Test1/src/js/90-harness.js
window.__galagaHarness__
/Users/stevenwoods/Documents/Codex-Test1/tools/log-viewer/
server.js indexes run folders under harness-artifacts/
app.js synchronizes repaired videos, event streams, clips, and issue drafting
summary.json plus neighboring session/video artifacts
/Users/stevenwoods/Documents/Codex-Test1/tools/build/build-index.js
/Users/stevenwoods/Documents/Codex-Test1/dist/dev/index.html
/Users/stevenwoods/Documents/Codex-Test1/dist/dev/build-info.json
/Users/stevenwoods/Documents/Codex-Test1/tools/build/promote-beta.js
/Users/stevenwoods/Documents/Codex-Test1/dist/beta/
/Users/stevenwoods/Documents/Codex-Test1/tools/build/promote-production.js
/Users/stevenwoods/Documents/Codex-Test1/dist/production/
/Users/stevenwoods/Documents/Codex-Test1/tools/build/publish-lane.js
dist/beta/ or dist/production/ into sgwoods/Aurora-Galactica
/Users/stevenwoods/Documents/Codex-Test1/tools/build/check-publish-ready.js
HEAD
/Users/stevenwoods/Documents/Codex-Test1/tools/dev/local-resume.js
dist/dev game server and the log viewer together
/Users/stevenwoods/Documents/Codex-Test1/tools/dev/local-stop.js
/Users/stevenwoods/Documents/Codex-Test1/tools/build/sync-public-pages.js
sgwoods/public repo
/Users/stevenwoods/Documents/Codex-Test1/tools/build/sync-public-pages.js
sgwoods/public repo from build metadata
/Users/stevenwoods/Documents/Codex-Test1/.github/workflows/pages.yml
/Users/stevenwoods/Documents/Codex-Test1/.github/workflows/sync-public-pages.yml
sgwoods/public

/Users/stevenwoods/Documents/Codex-Test1/src/js/10-gameplay.js
spawnFormation()
spawnChallenge()
spawnStage()
runStage1Script()

These functions define the main board composition and stage transitions.

/Users/stevenwoods/Documents/Codex-Test1/src/js/10-gameplay.js
spawnChallenge()
updateChallengeEnemy()
challengeGroupBonus()

Challenge stages are one of the main fidelity-sensitive systems. Manual-backed rules currently modeled:

/Users/stevenwoods/Documents/Codex-Test1/src/js/10-gameplay.js
canCapture()
capturePlayer()
finishCapture()
destroyCarriedFighter()
awardKill(...)
update(...)

Recent manual-backed rule work includes:

/Users/stevenwoods/Documents/Codex-Test1/src/js/10-gameplay.js
assignEscorts(...)
activeEscortCount(...)
awardKill(...)

This is where the Stage 4+ special squadron bonus behavior now lives.

/Users/stevenwoods/Documents/Codex-Test1/src/js/10-gameplay.js
stageTune(...) in /Users/stevenwoods/Documents/Codex-Test1/src/js/00-boot.js
stageBandProfile(...) in /Users/stevenwoods/Documents/Codex-Test1/src/js/00-boot.js
spawnStage() and loseShip()
updateEnemy()

If Stage 4/5 feels wrong, this is usually where to look first.

/Users/stevenwoods/Documents/Codex-Test1/src/js/00-boot.js
STAGE_BAND_PROFILES
stageBandProfile(...)
enemyFamilyForType(...)
/Users/stevenwoods/Documents/Codex-Test1/src/js/10-gameplay.js
makeEnemy(...)
familyMotion(...)
spawnStage() stage-profile logging
/Users/stevenwoods/Documents/Codex-Test1/src/js/20-render.js
enemyPalette(...)
FAMILY_PIXELS

This is where stage-banded family progression now lives for issues like later-stage enemy variety and future transform-style fidelity work.

/Users/stevenwoods/Documents/Codex-Test1/tools/harness/run-gameplay.js
/Users/stevenwoods/Documents/Codex-Test1/tools/harness/analyze-run.js
.json + .webm
/Users/stevenwoods/Documents/Codex-Test1/tools/harness/tuning-report.js
/Users/stevenwoods/Documents/Codex-Test1/tools/harness/scenarios/
descent timing, Stage 4 pressure, and squadron bonuses

/Users/stevenwoods/Documents/Codex-Test1/harness-artifacts/
summary.json as a reviewable run

Primary rule references:

/Users/stevenwoods/Documents/Codex-Test1/reference-artifacts/manuals/galaga-1981-namco/README.md
/Users/stevenwoods/Documents/Codex-Test1/reference-artifacts/manuals/galaga-1981-namco/Galaga_-_1981_-_Namco.pdf

Secondary progression/fidelity notes:

/Users/stevenwoods/Documents/Codex-Test1/reference-artifacts/walkthroughs/trueachievements-galaga/README.md

Use the manual first when a rule question exists. Use walkthrough/reference clips as secondary help for later-stage variety and visual comparison.

Runtime dependencies, hosting, feedback transport, and local-only systems are pulled from the external services inventory.

Generated from EXTERNAL_SERVICES.md during build.

This document is the current source of truth for external services used by Aurora Galactica, what they are used for, and what is local-only instead.

https://iddyodcknmxupavnuuwg.supabase.comine
/Users/stevenwoods/Documents/Codex-Test1/src/js/05-supabase.js
/Users/stevenwoods/Documents/Codex-Test1/tools/build/build-index.js
https://api.web3forms.com/submit
/Users/stevenwoods/Documents/Codex-Test1/src/js/00-boot.js
https://sgwoods.github.io/Aurora-Galactica/
https://sgwoods.github.io/Aurora-Galactica/beta/
/Users/stevenwoods/Documents/Codex-Test1/README.md
/Users/stevenwoods/Documents/Codex-Test1/RELEASE_POLICY.md
https://github.com/sgwoods/Codex-Test1
https://github.com/sgwoods/Aurora-Galactica
gh
/Users/stevenwoods/Documents/Codex-Test1/tools/build/sync-public-pages.js
/Users/stevenwoods/Documents/Codex-Test1/tools/build/verify-public-sync.js

These are not external services.

localStorage
IndexedDB
MediaRecorder
http://localhost:8000
http://127.0.0.1:4311/

The formal distinction between browser-local replay state, browser download exports, and the canonical harness-artifacts review archive is documented in the artifact policy.

Generated from ARTIFACT_POLICY.md during build.

This project has three distinct artifact locations. Treating them as separate on purpose avoids the recurring confusion between player exports, browser-local replay state, and the developer review archive.

This is the browser-native replay feature used by a player who launches the game in-browser with no extra setup.

IndexedDB
🎞 replay surface

This is the right default for dev, beta, and production player use because it requires no filesystem access and works inside normal browser constraints.

This is the explicit user-facing export path for logs and downloaded recordings.

neo-galaga-session-*.json
neo-galaga-video-*.webm
~/Downloads/

This is the correct export destination for dev, beta, and production because the browser controls download placement. The game should not promise a repo-local path for player-triggered downloads.

This is the normalized artifact tree used by the harness, analyzer, and log viewer.

<workspace>/harness-artifacts/
summary.json
neo-galaga-session-*.json
neo-galaga-video-*.review.webm when available

This is the source of truth for:

<workspace>/harness-artifacts/
npm run harness:import-latest
<workspace>/harness-artifacts/ on a dev machine.

There is no separate filesystem export location for beta or production.

dev: replay lives in browser storage, exports go to browser downloads
beta: replay lives in browser storage, exports go to browser downloads
production: replay lives in browser storage, exports go to browser downloads
dist/dev/, dist/beta/, and dist/production/ are not runtime capture archives.
export.mov.png is a build snapshot artifact, not a session/replay artifact.
<workspace>/harness-artifacts/.

Going forward, use this distinction:

IndexedDB = native local replay feature for players
<workspace>/harness-artifacts/ = canonical developer review archive after import/normalization

If documentation or UI text blurs those boundaries, treat it as a documentation bug and correct it.
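To make the naming distinction concrete, here is a minimal sketch that sorts file names into the artifact kinds named in this policy. The function name `classifyArtifact` and the returned labels are hypothetical and are not taken from the repo's tooling; only the file-name patterns come from the policy text.

```javascript
// Hypothetical helper: classifies a file name using the patterns listed in
// the artifact policy. The labels it returns are illustrative only.
function classifyArtifact(fileName) {
  if (fileName === "summary.json") return "summary";
  if (/^neo-galaga-session-.*\.json$/.test(fileName)) return "session";
  // Check the review video before the raw video: both end in .webm.
  if (/^neo-galaga-video-.*\.review\.webm$/.test(fileName)) return "review-video";
  if (/^neo-galaga-video-.*\.webm$/.test(fileName)) return "video";
  return "unknown";
}

// Example with made-up file names that follow the documented patterns.
const names = [
  "summary.json",
  "neo-galaga-session-example.json",
  "neo-galaga-video-example.review.webm",
  "export.mov.png",
];
for (const name of names) {
  console.log(name, "->", classifyArtifact(name));
}
```

The review-video check runs first because a `.review.webm` name would also match the broader `.webm` pattern.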

Reference priorities, baseline topics, and the evidence model are pulled directly from the fidelity baseline document.

Generated from REFERENCE_BASELINE.md during build.

This document defines how we turn original Galaga behavior into actionable work for this project.

Create a durable, repeatable baseline for:

For each fidelity topic:

digital input

stepping

stage1-descent harness scenario
/Users/stevenwoods/Documents/Codex-Test1/reference-artifacts/analyses/first-challenge-stage/README.md
/Users/stevenwoods/Documents/Codex-Test1/reference-artifacts/analyses/challenge-stage-reference/README.md
stage3-challenge
stage6-regular
stage7-challenge
matches our present reading of the reference material for #33

/Users/stevenwoods/Documents/Codex-Test1/reference-artifacts/analyses/release-reference-pack/README.md
rescue-dual
capture-rescue-dual
carried-boss-diving-release
carried-boss-formation-hostile
second-capture-current
natural-capture-cycle
stage4-capture-pressure
evidence to claim original-accurate timing/composition for the bonus-yielding three-fighter attack clusters seen in regular play

composition, and scoring behavior before expanding that system further

Important near-term exception:

1.0, the game should still visibly demonstrate the classic boss-with-two-escorts special attack and show the resulting bonus in normal play, even if deeper late-game pattern research stays post-1.0

stage12-variety
/Users/stevenwoods/Documents/Codex-Test1/reference-artifacts/analyses/stage4-fairness/README.md
/Users/stevenwoods/Documents/Codex-Test1/reference-artifacts/analyses/release-reference-pack/README.md
stage4-five-ships
stage4-survival
sources.

/Users/stevenwoods/Documents/Codex-Test1/reference-artifacts/analyses/external-galaga5/README.md

High-value future baseline additions:

Current focused release pack:

/Users/stevenwoods/Documents/Codex-Test1/reference-artifacts/analyses/release-reference-pack/README.md

When a gameplay-fidelity issue is opened or worked, it should eventually point back to at least one entry in this document or to a durable source under:

/Users/stevenwoods/Documents/Codex-Test1/reference-artifacts/

Collaborator guidance, build expectations, and review defaults are pulled from the contributing guide.

Generated from CONTRIBUTING.md during build.

This project is still in prerelease. We are optimizing for fast iteration, reference-backed fidelity work, and safe collaboration.

/Users/stevenwoods/Documents/Codex-Test1/src/
/Users/stevenwoods/Documents/Codex-Test1/dist/

cd /Users/stevenwoods/Documents/Codex-Test1

npm run build

/Users/stevenwoods/Documents/Codex-Test1/README.md
/Users/stevenwoods/Documents/Codex-Test1/SOURCE_MAP.md
/Users/stevenwoods/Documents/Codex-Test1/ARCHITECTURE.md
/Users/stevenwoods/Documents/Codex-Test1/REFERENCE_BASELINE.md
/Users/stevenwoods/Documents/Codex-Test1/PLAN.md
/Users/stevenwoods/Documents/Codex-Test1/PRODUCT_ROADMAP.md

codex/ prefix for working branches
codex/challenge-fidelity-pass
codex/stage4-collision-tuning
codex/reference-baseline-docs

npm run build

npm run promote:production

npm run promote:beta

npm run publish:check:beta

npm run publish:beta

npm run publish:check:production

npm run publish:production

/Users/stevenwoods/Documents/Codex-Test1/dist/dev/index.html

npm run local:resume

/Users/stevenwoods/Documents/Codex-Test1/dist/beta/
/Users/stevenwoods/Documents/Codex-Test1/dist/production/

npm run harness -- --session /absolute/path/to/neo-galaga-session.json

npm run harness -- --scenario stage3-challenge

npm run harness:batch -- --profile quick

If a change touches gameplay, include at least one of:

If a change is fidelity-driven, tie it back to:

/Users/stevenwoods/Documents/Codex-Test1/reference-artifacts/
/Users/stevenwoods/Documents/Codex-Test1/RELEASE_POLICY.md

Versioning and release recommendation rules are pulled directly from the release policy document.

Generated from RELEASE_POLICY.md during build.

MAJOR.MINOR.PATCH-prerelease
MAJOR.MINOR.PATCH
MAJOR.MINOR.PATCH-beta.<number>
surface-version+build.<number>.sha.<shortcommit>.dirty
1.0.0, production hotfixes should bump PATCH:
1.0.1
1.0.2

Examples:

0.5.0-alpha.1+build.115.sha.b0d812c
0.5.0+build.9.sha.457df28
0.5.0-beta.1+build.9.sha.457df28.beta

MAJOR
1.x for a public-quality release where the scoped product goal is stable and shippable
1-4) rather than full long-form Galaga expansion
MINOR
PATCH
prerelease
alpha: active system building, rules changes, frequent balance changes
beta: feature-complete enough for broader testing, still tuning quality and regressions
rc: release candidate, only bug fixes and polish before a stable cut

production
https://sgwoods.github.io/Aurora-Galactica/
1.0
beta
https://sgwoods.github.io/Aurora-Galactica/beta/
pre-production
https://github.com/sgwoods/Codex-Test1
Codex-Test1
Aurora-Galactica

Codex-Test1:
src/
npm run build
/Users/stevenwoods/Documents/Codex-Test1/dist/dev/
npm run local:resume
npm run promote:beta
/Users/stevenwoods/Documents/Codex-Test1/dist/dev/
/Users/stevenwoods/Documents/Codex-Test1/dist/beta/
npm run publish:check:beta
npm run publish:beta
Aurora-Galactica so:
https://sgwoods.github.io/Aurora-Galactica/beta/
serves the promoted checkpoint

The beta lane is intentionally a snapshot of selected generated artifacts under dist/, not a separate branch or a second build pipeline. Codex-Test1 remains the engineering source of truth; Aurora-Galactica is the public release surface for both production and beta.
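The lane layout described above can be sketched as a small lookup table. This is an illustrative assumption, not the structure used by `publish-lane.js`: the `LANES` object, its field names, and `laneInfo` are hypothetical, while the `dist/` directories and public URLs are the ones listed in this document.

```javascript
// Hypothetical lane table: maps each lane to its generated-artifact
// directory under dist/ and to its public URL, if it has one.
const LANES = {
  dev: { distDir: "dist/dev/", publicUrl: null }, // local-only lane
  beta: {
    distDir: "dist/beta/",
    publicUrl: "https://sgwoods.github.io/Aurora-Galactica/beta/",
  },
  production: {
    distDir: "dist/production/",
    publicUrl: "https://sgwoods.github.io/Aurora-Galactica/",
  },
};

// Looks up a lane by name and fails loudly on anything unrecognized.
function laneInfo(name) {
  if (!Object.prototype.hasOwnProperty.call(LANES, name)) {
    throw new Error(`Unknown lane: ${name}`);
  }
  return LANES[name];
}

console.log(laneInfo("beta").distDir); // dist/beta/
```

Keeping the lanes in one table reflects the point that beta is a promoted snapshot of generated artifacts rather than a separate branch or build pipeline.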

production
beta
pre-production

This is the current launch-safe answer to #76: non-production lanes no longer use the same default write path as production, even though they can still mirror production leaderboard reads.

Optional test-pilot override for non-production:

TEST_ACCOUNT_EMAIL
TEST_ACCOUNT_USER_ID

npm run build
npm run publish:beta
npm run approve:beta
npm run publish:production
Aurora-Galactica so:
https://sgwoods.github.io/Aurora-Galactica/
serves the promoted production build

A hotfix is a small, controlled production repair. It is not a fast path around the release process.

Goals:

Required process:

Codex-Test1 first:
npm run build
that dependency before beta if the probe is available
npm run harness:check:hotfix-smoke
npm run harness:check:live-input:beta
npm run publish:beta
provide an in-app refresh reminder:
npm run approve:beta
npm run publish:production

Production data rule:

minimally.

Aurora hotfix checklist:

npm run build

# run focused harness checks

npm run harness:check:hotfix-smoke

# if controls/input/overlay behavior changed

npm run harness:check:live-input:beta

# run external dependency probes when the hotfix touches them

npm run publish:beta

# manual hosted beta verification

npm run approve:beta

npm run publish:production

Hotfix smoke suite contents:

node tools/harness/check-input-mapping.js
node tools/harness/check-popup-surfaces.js
node tools/harness/check-feedback-submit-path.js
node tools/harness/check-remote-score-submit.js

#130 is a required pre-1.0 release operation.
1.0 production launch, reset the shared production leaderboard so the official public scoreboard starts from zero.

SUPABASE_SERVICE_ROLE_KEY=... npm run leaderboard:inspect:production
SUPABASE_SERVICE_ROLE_KEY=... npm run leaderboard:reset:production
scores table.
as an explicit release step, not a routine browser-side admin action.

Tracked hardening item:

#113

npm run build
npm run promote:production
npm run sync:public
sgwoods/public project-summary pages and manifests from:
/Users/stevenwoods/Documents/Codex-Test1/dist/production/build-info.json
/Users/stevenwoods/Documents/Codex-Test1/release-notes.json

This gives every build a unique identity without forcing a SemVer bump for every commit.
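As a rough illustration of how a build stamp carries both the SemVer part and the per-build identity, the sketch below parses stamps shaped like the examples in this policy. The `parseBuildStamp` function, its regular expression, and the returned field names are assumptions made for illustration; the actual build tooling may compose or read stamps differently.

```javascript
// Hypothetical parser for stamps such as
// "0.5.0-alpha.1+build.115.sha.b0d812c" or "...sha.457df28.beta".
const STAMP =
  /^(\d+)\.(\d+)\.(\d+)(?:-([0-9A-Za-z.]+))?\+build\.(\d+)\.sha\.([0-9a-f]+)((?:\.[a-z]+)*)$/;

function parseBuildStamp(stamp) {
  const m = STAMP.exec(stamp);
  if (!m) return null; // not in the assumed stamp shape
  const [, major, minor, patch, prerelease, build, sha, suffix] = m;
  return {
    major: Number(major),
    minor: Number(minor),
    patch: Number(patch),
    prerelease: prerelease ?? null, // e.g. "alpha.1", or null for a stable version
    build: Number(build),
    sha,
    // Trailing markers such as "dirty" or "beta", if any.
    flags: suffix ? suffix.slice(1).split(".") : [],
  };
}

const parsed = parseBuildStamp("0.5.0-alpha.1+build.115.sha.b0d812c");
console.log(parsed.build); // 115
```

Two stamps with the same `MAJOR.MINOR.PATCH` still differ in `build` and `sha`, so each build stays distinguishable without a version bump.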

PATCH when:
MINOR when:
alpha to beta
beta to rc
MAJOR only when:
PATCH bump after:
MINOR bump after:
/Users/stevenwoods/Documents/Codex-Test1/release-history/
pre-1.0
beta
/beta/ lane for these checkpoint builds rather than updating it on every production change
1.0
1 through Stage 4 feel stable as one coherent game loop
3 challenge stage is rewarding and readable
4 endpoint is fair and beatable
consciously documented

for general external use

1.0: